Most businesses shopping for an AI agent platform in 2026 will make the same mistake: they will evaluate demos instead of architectures. They will watch a polished chatbot answer a scripted question, get impressed, and sign a contract, only to discover six months later that the system cannot access live order data, cannot escalate intelligently, and cannot handle anything outside a narrow FAQ boundary. That is not a buyer problem. That is a market that has not been honest about what "agentic" actually requires.

This guide is built for operators who want to avoid that outcome. It will walk you through how to evaluate an AI agent platform correctly, what separates genuine agentic capability from a rules engine wearing a language model costume, and where most deployments collapse in production. Steps AI Agentic Chatbot is built specifically to handle the architectural gaps this guide exposes, and it will be referenced throughout as the practical benchmark.

By the end, you will know exactly what to ask, what to test, and what to walk away from.

Why Most AI Agent Platforms Fail Before You Go Live

The failure usually happens at the integration layer, not the AI layer. A platform can have impressive natural language understanding and still be completely useless if it cannot connect to your CRM, your order management system, or your internal knowledge base in real time. Most vendors solve this by pre-ingesting static data. They pull a snapshot of your documentation, embed it, and call it "connected." The moment a customer asks about their live shipment status or a recently updated policy, the system either halts or hallucinates.

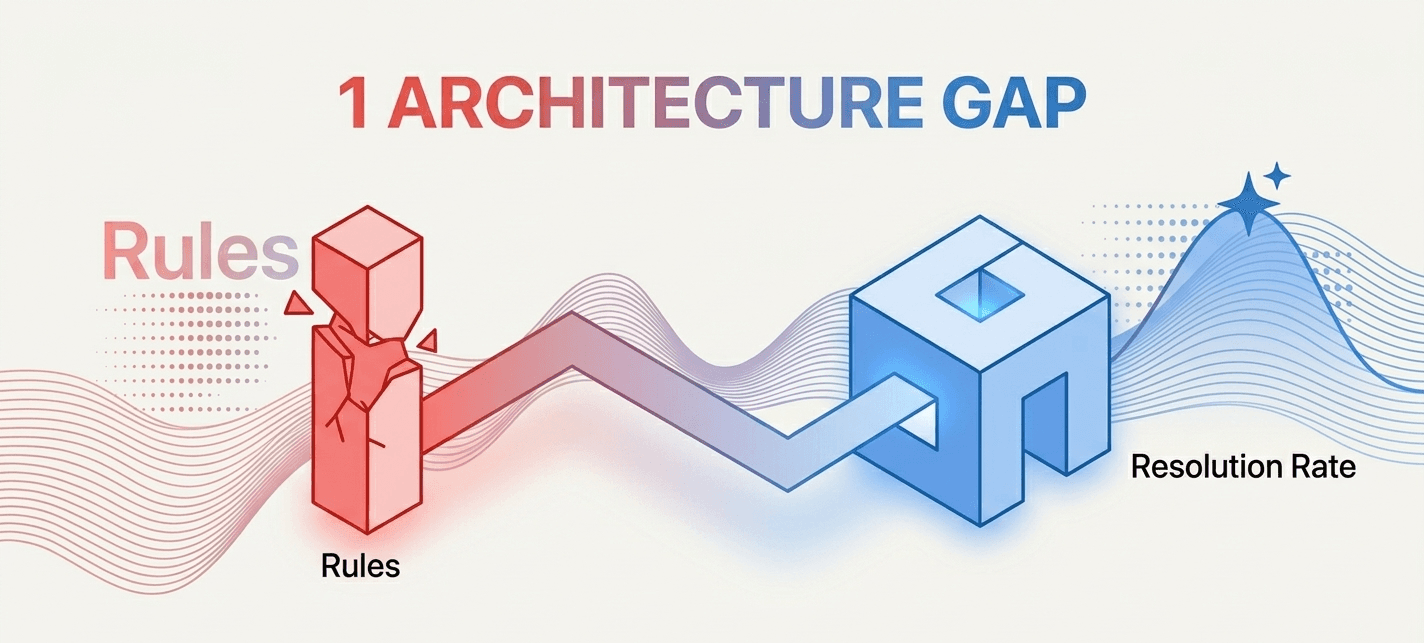

The core issue is architecture. Rule-based chatbots disguised as AI agents are still widespread in 2026. They use large language models for language generation but rely on brittle decision trees for logic. They look capable in controlled environments and break under real user behavior. Real agentic systems plan, retrieve live data, choose between tools, and take actions rather than just generating text.

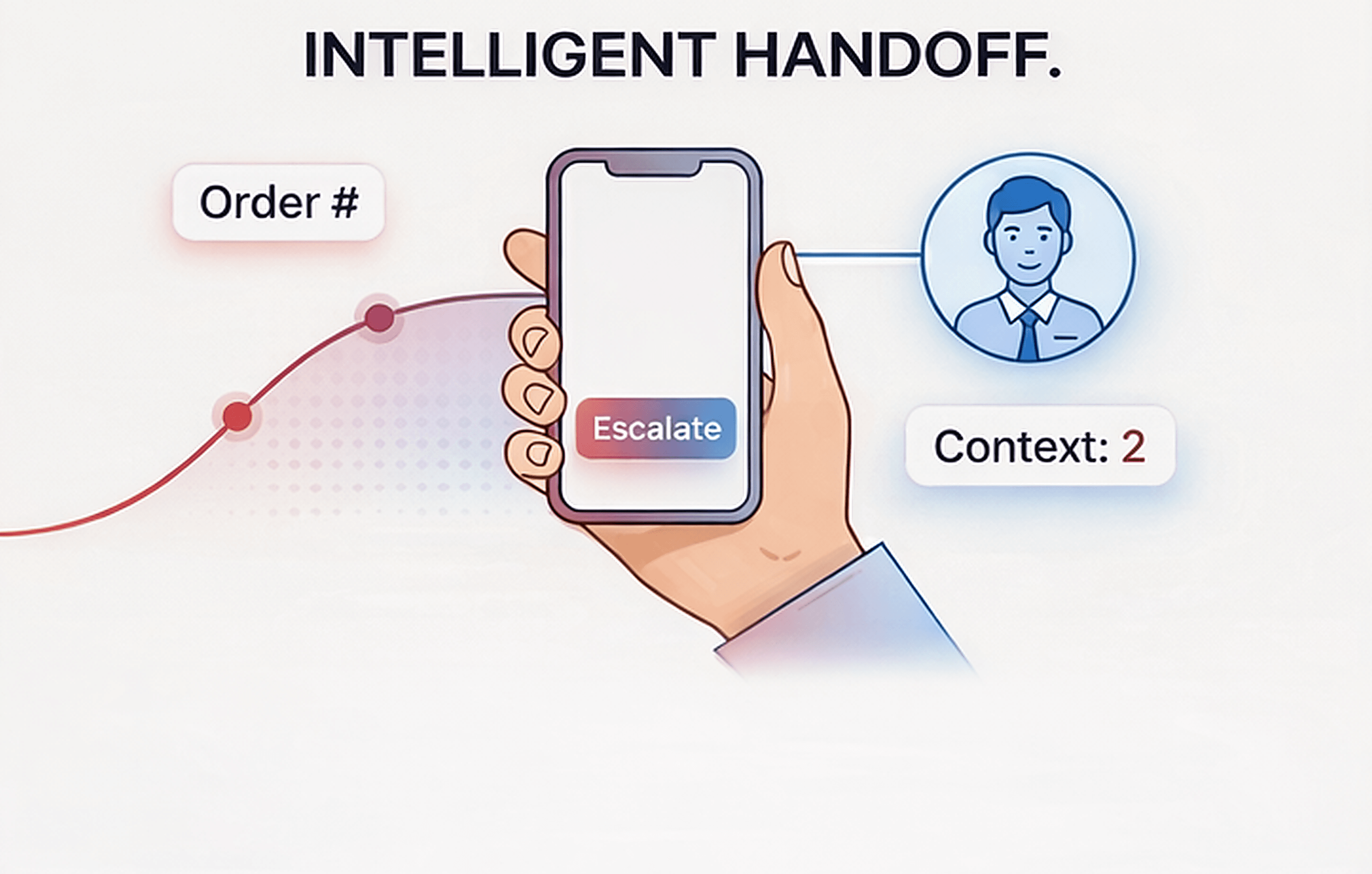

Most buyers also underestimate the escalation problem. A chatbot that cannot escalate gracefully to a human agent at the right moment is not a support asset. It is a friction point. When a chatbot on your website hurts customer experience, it is almost always because escalation was designed as an afterthought. The system keeps looping users through dead-end responses instead of routing them to the correct person with context already transferred.

Do not let any vendor skip the escalation design question during your evaluation.

What "Agentic" Actually Means for a Website AI Agent Platform

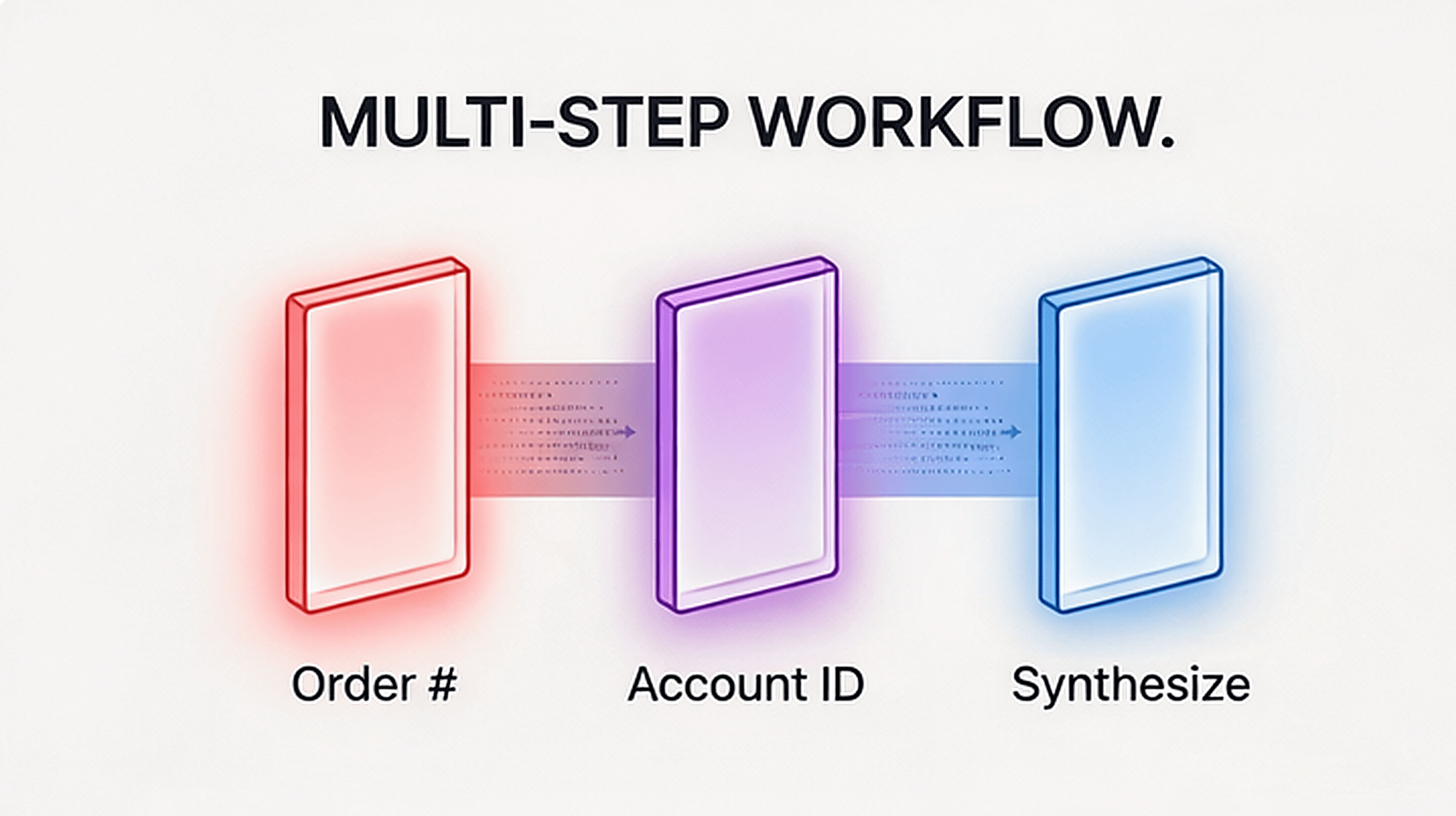

The word "agentic" has been stretched beyond recognition by marketing teams. Here is the practical definition that matters for a website deployment: an agentic system can break a user's request into subtasks, choose the right tool or data source for each subtask, execute those steps in sequence, and synthesize a final response, without a human scripting every branch.

That is meaningfully different from a system that matches user intent to a pre-written answer. Agentic behavior requires tool use, memory, and multi-step reasoning. A visitor asking "Can I change my subscription plan and get a prorated refund for the remaining days?" is issuing a multi-step request. A non-agentic system gives a generic policy answer. An agentic system checks the account, calculates the proration, confirms eligibility, and either executes the change or routes to the right team with all context attached.
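The subscription example above can be made concrete with a small sketch. It is illustrative only: the `Subscription` record, its field names, the day-based proration rule, and the `handle_plan_change` function are all assumptions for this example, not the behavior of any particular platform or billing system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Subscription:
    """Hypothetical account record; field names are illustrative."""
    plan: str
    price_cents: int      # amount paid for the current billing period
    period_start: date
    period_end: date      # exclusive: first day of the next period
    refundable: bool      # assumed eligibility flag from the billing system


def prorated_refund_cents(sub: Subscription, today: date) -> int:
    """Refund for unused days, rounded down to the nearest cent."""
    total_days = (sub.period_end - sub.period_start).days
    if total_days <= 0:
        raise ValueError("billing period must be at least one day long")
    remaining_days = (sub.period_end - today).days
    remaining_days = max(0, min(remaining_days, total_days))
    return sub.price_cents * remaining_days // total_days


def handle_plan_change(sub: Subscription, new_plan: str, today: date) -> dict:
    """Run the subtasks in order: check account, calculate, confirm, act or route."""
    steps = ["checked_account"]
    refund = prorated_refund_cents(sub, today)
    steps.append("calculated_proration")
    if not sub.refundable:
        # Not eligible: hand off to a human team with the work done so far.
        steps.append("eligibility_failed")
        return {"action": "route_to_team", "refund_cents": 0, "steps": steps}
    steps.append("eligibility_confirmed")
    return {
        "action": "execute_change",
        "new_plan": new_plan,
        "refund_cents": refund,
        "steps": steps,
    }
```

With a 30-day period costing 3000 cents and 10 days remaining, the sketch returns a 1000-cent refund and an `execute_change` action. The `steps` list is what would travel with the request if it had to be routed to a team instead.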

This distinction matters enormously for ROI. A non-agentic chatbot can deflect simple FAQs. An agentic system can resolve complex requests end to end. The former removes only a fraction of your support volume. The latter changes the economics of your support operation entirely. Understanding what makes a website chatbot effective starts with this distinction, because teams that do not get it spend money on the wrong tier of product.

When evaluating any AI agent platform, demand a live demonstration of multi-step task completion using your actual data, not a curated demo dataset.

How to Evaluate an AI Agent Platform the Right Way

Start with your five hardest support scenarios, not your five easiest. Most vendors will show you their best case. Your job is to force the worst case. Pull five real tickets from the last 90 days that required back-and-forth, data lookups, policy judgment, and escalation. Run those through any platform you evaluate. The performance gap between genuine agentic systems and glorified FAQ bots becomes immediately visible.

Here is a practical evaluation framework:

Integration depth. Can the platform connect to your live systems, not just your documentation? Test a query that requires real-time data retrieval. If the answer comes from a static knowledge base when it should come from a live API, that is a disqualifying gap for most businesses.

Reasoning transparency. Can you see how the system reached its answer? A platform that gives you no visibility into its reasoning chain is impossible to debug, audit, or improve. This is especially critical in regulated industries where you need to explain AI decisions.

Escalation design. Does the system hand off to humans with full context attached? Not just a transcript dump, but structured context: what the user asked, what the system tried, what data was retrieved, and why it could not resolve the issue. Poor escalation design is one of the primary reasons website chatbots fail in production.

Fallback behavior. What does the system do when it does not know? Confident wrong answers are far more damaging than honest uncertainty. Test edge cases deliberately. A well-designed platform says "I do not have that information, let me connect you to someone who does" rather than fabricating a response.

Customization without engineering dependency. Can your team adjust conversation flows, update knowledge, and change routing rules without filing a ticket with the vendor? If every change requires a developer, your operational agility disappears.
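To make the escalation criterion testable during an evaluation, it can help to write down what "structured context" should contain. The sketch below is one possible shape, assuming four fields taken from the description above. The class name `HandoffContext`, the field names, and the JSON serialization are hypothetical and do not represent any vendor's actual handoff format.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class HandoffContext:
    """Hypothetical handoff payload; field names are illustrative."""
    user_request: str                                              # what the user asked
    attempted_steps: list[str] = field(default_factory=list)      # what the system tried
    retrieved_data: dict[str, str] = field(default_factory=dict)  # what data was retrieved
    unresolved_reason: str = ""                                    # why it could not resolve

    def missing_fields(self) -> list[str]:
        """Names of fields left empty, in declaration order."""
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        """Serialize for a ticketing system or an agent console."""
        return json.dumps(asdict(self), indent=2)
```

During a trial, you could ask the vendor to show the payload a human agent receives and check it against a list like this. A handoff where `missing_fields()` would come back non-empty is closer to a transcript dump than to structured context.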

Where Steps AI Agentic Chatbot Fits This Framework

Steps AI is built around the problems this guide has been describing, not around the demo scenarios most platforms optimize for. The architecture is genuinely agentic: it connects to live data sources, reasons across multiple steps, and executes actions rather than just generating responses.

The escalation design in Steps AI Agentic Chatbot is not an afterthought. It is a core architectural decision. When a conversation reaches a point where human judgment is required, the handoff includes full structured context, not a raw transcript. The receiving agent knows exactly what was tried, what data was accessed, and what the user actually needs. That changes the quality of the handoff and the speed of resolution on the human side.

Steps AI Agentic Chatbot is also built for operators, not just developers. Conversation flows can be adjusted, knowledge sources updated, and routing logic changed by the team managing the product without requiring engineering support for every iteration. That matters because a chatbot's performance degrades when the chatbot cannot be updated quickly in response to real usage patterns.

The honest disclaimer: no platform eliminates implementation risk entirely. Deploying any agentic system on a live website requires careful integration work, accurate knowledge sourcing, and disciplined testing before go-live. Steps AI reduces that risk through better defaults and clearer tooling, but it does not remove the operational discipline the deployment requires from your side.

For businesses thinking about support volume and ticket reduction specifically, the relationship between AI chatbots and reduced support tickets depends almost entirely on whether the system can resolve multi-step queries independently, which is exactly the capability gap this platform is designed to close.

The Deployment Mistakes That Kill ROI Before It Starts

Even the right AI agent platform fails when deployed incorrectly. The most common mistake is launching with incomplete knowledge coverage. Teams spend weeks on platform selection and then rush knowledge base construction. The chatbot goes live, encounters real user queries that fall outside the curated content, and starts either looping or fabricating. Users lose trust fast, and recovery is harder than getting it right initially.

The second most common mistake is launching without a defined escalation policy. Teams assume the AI will handle escalation naturally. It will not, unless escalation logic is explicitly designed: which query types trigger human routing, which agent queue they route to, what context gets transferred, and how response time SLAs are maintained during the handoff. These decisions need to be made before the platform goes live, not after the first complaint about a missed escalation.
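One way to force those decisions before launch is to write the escalation policy down as data. The sketch below is a minimal illustration under stated assumptions: the query types, queue names, context fields, and SLA values are invented for the example, and `EscalationRule` and `route` are hypothetical names rather than part of any real platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EscalationRule:
    """One row of a hypothetical escalation policy."""
    query_type: str                  # which query type triggers human routing
    queue: str                       # which agent queue receives it
    context_fields: tuple[str, ...]  # what context gets transferred
    sla_minutes: int                 # target first-response time after handoff


# Example values only; a real policy comes from your own support operation.
POLICY = {
    rule.query_type: rule
    for rule in (
        EscalationRule(
            "billing_dispute",
            "billing-tier2",
            ("user_request", "invoice_id", "attempted_steps"),
            30,
        ),
        EscalationRule(
            "account_security",
            "trust-and-safety",
            ("user_request", "attempted_steps"),
            10,
        ),
    )
}


def route(query_type: str) -> Optional[EscalationRule]:
    """Return the matching rule, or None if this query type stays with the AI."""
    return POLICY.get(query_type)
```

If a row cannot be filled in, for example because nobody knows which queue owns billing disputes, that gap is easier to fix before go-live than after the first missed escalation.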

The third mistake is measuring the wrong metric. Teams celebrate containment rate, meaning the percentage of conversations the AI handles without human intervention, without checking whether those contained conversations were actually resolved. A high containment rate built on evasive or incomplete answers is not a success metric. It is a customer satisfaction problem accumulating in the background. The right metric is resolution rate: did the user get what they came for?
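The gap between the two metrics is easy to see with a small calculation. In this sketch, the `Conversation` fields are hypothetical, and it assumes you have some signal for whether the user's goal was met, such as a post-chat survey or a check for reopened tickets. It also assumes resolution rate is measured over all conversations, which is one reasonable definition among several.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    """Hypothetical conversation log entry."""
    escalated_to_human: bool
    user_goal_met: bool   # assumed signal, e.g. survey answer or no reopened ticket


def containment_rate(convs: list[Conversation]) -> float:
    """Share of conversations the AI handled without human intervention."""
    if not convs:
        raise ValueError("no conversations to measure")
    return sum(not c.escalated_to_human for c in convs) / len(convs)


def resolution_rate(convs: list[Conversation]) -> float:
    """Share of conversations where the user got what they came for."""
    if not convs:
        raise ValueError("no conversations to measure")
    return sum(c.user_goal_met for c in convs) / len(convs)


def contained_but_unresolved(convs: list[Conversation]) -> int:
    """Conversations that count toward containment but left the user stuck."""
    return sum(not c.escalated_to_human and not c.user_goal_met for c in convs)
```

Take ten conversations where eight are contained but only three of those eight are resolved, and the two escalated ones are both resolved. Containment is 0.8 while resolution is 0.5, and five conversations fall into the contained-but-unresolved group that a containment dashboard would hide.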

The impact chatbots have on customer support is only positive when the system resolves real needs. Deflection is not resolution. Platforms that optimize for containment without resolution are selling you a vanity metric.

Conclusion

Choosing an AI agent platform in 2026 is not a UI decision or a feature checklist decision. It is an architecture decision. The question is whether the system you are buying can genuinely plan, retrieve live data, reason across steps, and escalate with intelligence, or whether it is a well-marketed FAQ engine that will plateau at low-complexity query handling.

Evaluate on your hardest cases. Test integration depth with your actual systems. Scrutinize escalation design before you sign anything. Measure resolution rate, not containment rate.

Steps AI Agentic Chatbot is built for operators who have seen the gap between what AI chatbots promise and what they deliver in production. The architecture addresses the failures this guide has outlined, and the tooling gives your team the operational control needed to improve performance after launch, not just at launch.

The right platform will make your support operation faster, smarter, and more scalable. The wrong one will frustrate your customers and stall your team. The difference is usually visible in the first serious evaluation test. Do not skip that test.

See how Steps AI Agentic Chatbot handles real-world website queries at stepsai.co

Frequently Asked Questions

What is an AI agent platform and how is it different from a regular chatbot?

An AI agent platform supports multi-step reasoning, live data retrieval, and autonomous task execution. A regular chatbot matches user input to pre-written responses. The practical difference is that an agentic system can resolve complex, multi-part requests independently, while a standard chatbot can only handle simple, single-intent queries within a scripted boundary.

What should I look for in an AI agent platform for my website?

Prioritize real integration depth with your live systems, transparent reasoning you can audit, intelligent escalation with context transfer, honest fallback behavior when the system does not know something, and operator-level controls that do not require engineering support for every update.

How do I test an AI agent platform before buying?

Use your five hardest real support tickets from the last 90 days. Run them through every platform you evaluate using your actual data, not the vendor's demo dataset. The gap between genuine agentic systems and rules-based bots becomes immediately visible under real-world stress testing.

What is a realistic timeline for deploying an AI agent platform on a website?

A production-ready deployment typically requires four to eight weeks for integration work, knowledge base construction, escalation design, and pre-launch testing. Rushing any of those phases is the most common reason AI chatbot deployments underperform in the first 90 days.

Why do AI agent platforms fail even after a successful demo?

Because demos are built on curated data and scripted scenarios. Production environments have messy, unpredictable queries, incomplete data coverage, and edge cases the vendor never anticipated. Platforms that are not architected for real-world complexity look capable in demos and collapse when real users arrive.